Geotagging Photos at SFO Museum, Part 1 – Setting the Stage

This is the first part of a multi-part blog post (11 in all!) about geotagging photos in the SFO Museum collection. It’s also a blog post about how we’re doing that work and why we’re taking a longer road than we might otherwise to get there. Over the course of the next couple of weeks we’ll post one short blog post a day focused on a specific step, or area of concern, in that process. This first blog post will set the stage and outline some of our motivations for seeing geotagging photos in our collection as a chance to address larger issues in the cultural heritage sector.

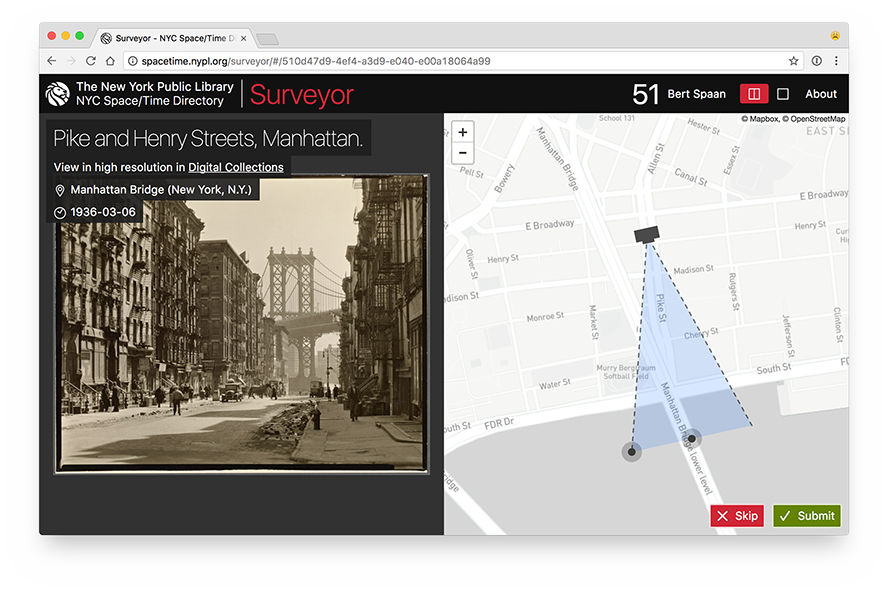

A few years ago, as part of their Space/Time Directory project, the New York Public Library (NYPL) released the Surveyor web application, a tool that allows individuals to geotag the photos in the NYPL collection.

All of the code for the Space/Time Directory is open source software, including the Leaflet.GeotagPhoto extension to the Leaflet.js map library. The extension provides a handy interface for indicating not just the location where a photo was taken but also its field of view.

Adding the Leaflet.GeotagPhoto extension to the Mills Field website and implementing controls to limit access to staff would have been pretty easy and pretty fast. But geotagging photos (images, really) is something that lots of people, and institutions, want to be able to do. This felt like an opportunity to develop a standalone and extensible tool that does one and only one thing: Geotag images independent of where those images come from or where the newly created data goes to.

The larger motivation for doing things this way is to build on work we described in the “Using the Placeholder Geocoder at SFO Museum” blog post and to attempt to address an evergreen subject in the cultural heritage sector: The lack of common software tools, and the challenges of integrating and maintaining those infrastructures, across multiple institutions.

My own feeling is that many past efforts have failed because they tried to do too much. It’s a bit of a simplification but a helpful way to think about these tools is to imagine they consist of three steps:

- Data comes from somewhere. For example, an image comes out of a database and an asset management system.

- Something happens to that data. For example, an image is geotagged.

- The data then goes somewhere else. For example, the geotagging data goes into a database.

The problem with many tools, developed by and for the sector, has been that they spend a lot of time and effort to abstract the first and the last points and ultimately fail. The nuances, details and limitations, not to mention the vast inequalities, in all the different technical infrastructures within the cultural heritage sector are legion. Developing an abstraction layer for the retrieval and publishing of cultural heritage materials that attempts to integrate and interface directly with an institution’s technical scaffolding is going to be a challenge at best and a fool’s errand at worst.

Recent efforts like the International Image Interoperability Framework (IIIF) have made good strides toward defining a common way to talk about retrieval and publishing between disparate systems but, importantly, leave the actual implementation details to individual systems. IIIF describes a way that systems should speak to one another but doesn’t try to do any of the talking itself.

Rather than automating where the data comes from, or goes to, we would be better served as a sector by focusing on shared tools that automate what can happen to data and that make that data easy to both ingest and export in the process.
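To make the three steps a little more concrete, here is a minimal sketch in Python of what "one tool, pluggable ends" might look like. It is not the code we wrote for SFO Museum. Every name in it (`Image`, `Geotag`, `Source`, `Sink`, `ListSource`, `MemorySink`, `geotag_all`) is hypothetical and invented for illustration, and the `tagger` function stands in for a person placing a marker on a map.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List, Protocol


@dataclass
class Image:
    """Hypothetical record for an image that needs geotagging."""
    id: str
    url: str


@dataclass
class Geotag:
    """Hypothetical record for the result of geotagging one image."""
    image_id: str
    latitude: float
    longitude: float


class Source(Protocol):
    """Step 1: data comes from somewhere. Each institution supplies its own."""
    def images(self) -> Iterable[Image]: ...


class Sink(Protocol):
    """Step 3: data goes somewhere else. Each institution supplies its own."""
    def write(self, geotag: Geotag) -> None: ...


@dataclass
class ListSource:
    """A toy source backed by an in-memory list instead of a database."""
    items: List[Image]

    def images(self) -> Iterable[Image]:
        return iter(self.items)


@dataclass
class MemorySink:
    """A toy sink that collects geotags in memory instead of publishing them."""
    geotags: List[Geotag] = field(default_factory=list)

    def write(self, geotag: Geotag) -> None:
        self.geotags.append(geotag)


def geotag_all(source: Source, tagger: Callable[[Image], Geotag], sink: Sink) -> int:
    """Step 2: the only part the shared tool owns.

    It knows nothing about where images come from or where geotags go.
    Returns the number of images processed.
    """
    count = 0
    for image in source.images():
        sink.write(tagger(image))
        count += 1
    return count
```

The point of the sketch is the shape rather than the details: the shared tool only owns `geotag_all`, while an institution with an asset management system, or one with nothing but a folder of files, would each write their own small `Source` and `Sink` without asking the tool to understand either.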

The cultural heritage sector needs as many of these small, focused tools as it can produce. It needs them in the long-term to finally reach the goal of a common infrastructure that can be employed sector-wide. It needs them in the short-term to develop the skill and the practice required to make those tools successful. We need to learn how to scope the purpose of, and our expectations of, any single tool so that we can be generous with, and learn from, the inevitable missteps and false starts that will occur along the way.

A good example of this is the way the Leaflet.GeotagPhoto extension was designed and released independently of the Surveyor application itself. While another institution might want to run their own instance of Surveyor it’s really an application informed by and designed for the specific needs of NYPL. But that doesn’t prevent them from building another application on top of the Leaflet.GeotagPhoto plugin.

It is with that spirit in mind that we set out to build a simple and extensible stand-alone web application for geotagging images. Ultimately, as you’ll see in the blog posts to follow, this is an application designed by and for SFO Museum but we hope we’ve done things in a way that allows another institution to adapt and rearrange its core building blocks to suit their own needs.

In closing, here are some screenshots of the result of this work to date. They show the same field of view for an image of the main terminal building under construction, in 1953, ten years before and three years after the photo was taken.

As you can see the field of view for this photo is a bit short. It should extend all the way to Sweeney Ridge to the west of the airport but instead stops at the Terminal building. We’ll cover updating and fixing geotagged photos in a later blog post.